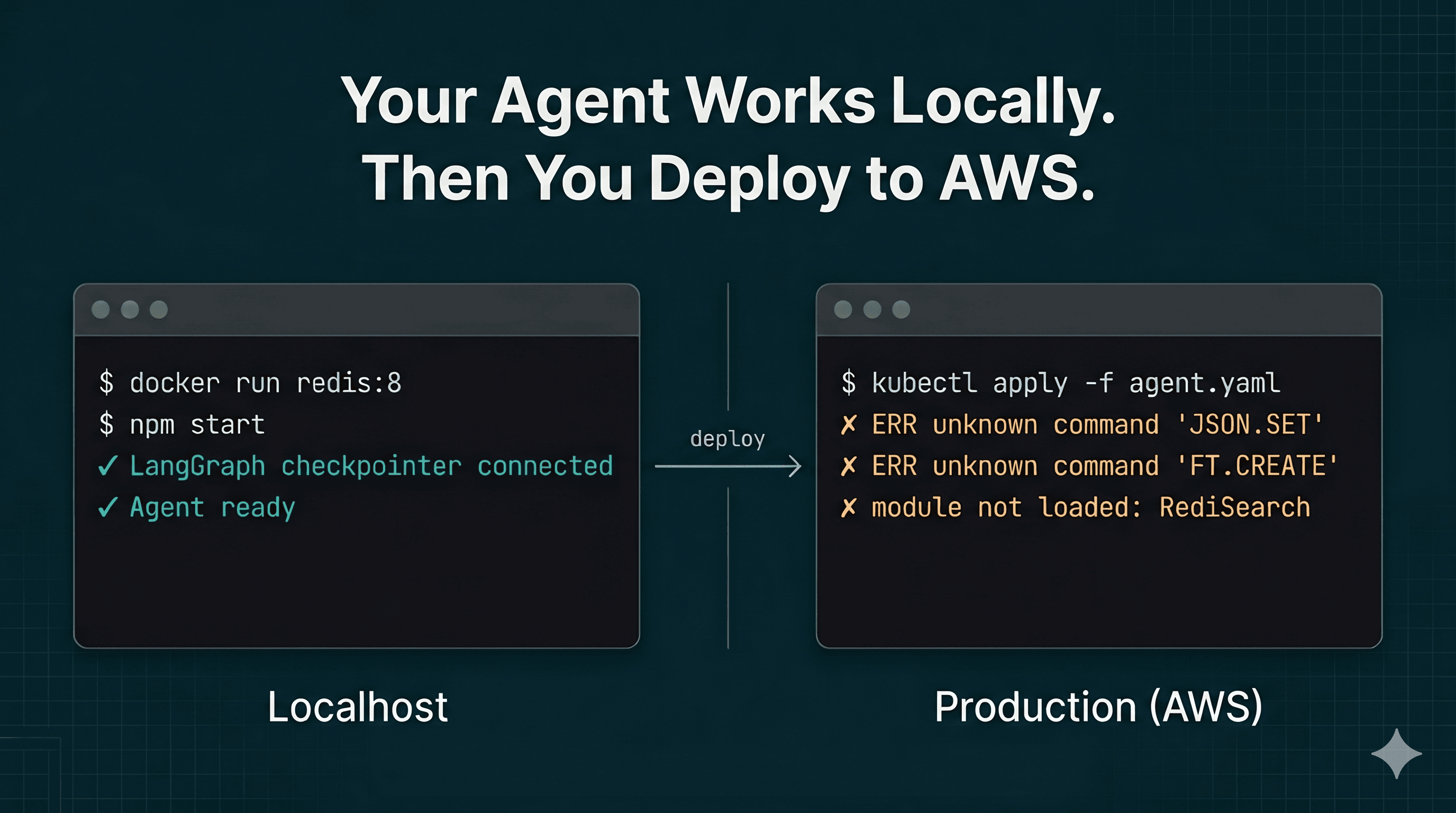

Your Agent Works Locally. Then You Deploy to AWS.

The quietest assumption in modern AI frameworks is that Redis means Redis 8 with modules. It doesn't, on any managed service you're likely to use. Here's why your agent cache breaks on deploy, and what to do about it.

You spin up a local Redis container. You pip install langgraph-checkpoint-redis or its npm equivalent. You wire up a checkpointer so your agent can resume mid-workflow after a crash. Everything works. Tests pass. You ship it to staging on AWS.

The first invocation throws. The logs say something about unknown command 'JSON.SET' or unknown command 'FT.CREATE'. You read the library source. You google the error. You learn that the checkpointer requires Redis 8 with RedisJSON and RediSearch modules loaded. You check your cache instance. Those modules are not there. They cannot be there. You cannot MODULE LOAD them yourself.

It does not matter whether you picked ElastiCache for Redis or ElastiCache for Valkey. Neither one allows custom modules, and neither one is Redis 8 in the first place. This is the point where most teams discover that the assumption baked into half the Redis-backed AI libraries is wrong for the Redis they actually run in production.

The deploy-day surprise

Local Redis comes with everything. The official redis:8 Docker image ships with JSON, Search, Bloom, TimeSeries, and a few more loaded by default. Run docker run redis:8 and every module command works. So your library reaches for FT.CREATE, JSON.SET, and FT.SEARCH without checking, because why would they not be there?

Then the library docs quietly say "requires Redis 8+ with RedisJSON and RediSearch" and you move on.
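One cheap defense is to fail at startup instead of on the first agent invocation. The sketch below assumes you fetch the server's command names once, for example with COMMAND LIST (available since Redis 7.0 and in Valkey, if your provider does not restrict it), and compares them against the commands your libraries need. The helper names and the prefix-to-module table are illustrative, not part of any library. A passing check is not the whole story: ElastiCache for Valkey's native search registers FT.CREATE, but it still rejects some index schemas.

```typescript
// Illustrative mapping from command prefix to the module that normally provides it.
const MODULE_BY_PREFIX: ReadonlyArray<readonly [string, string]> = [
  ["json.", "RedisJSON"],
  ["ft.", "RediSearch"],
  ["bf.", "RedisBloom"],
  ["ts.", "RedisTimeSeries"],
];

function moduleFor(command: string): string {
  const lower = command.toLowerCase();
  const hit = MODULE_BY_PREFIX.find(([prefix]) => lower.startsWith(prefix));
  return hit ? hit[1] : "core";
}

// Returns each required command the server does not know, tagged with its likely module.
// `available` is the list of command names the server reports; case is normalized here.
function missingCommands(required: readonly string[], available: readonly string[]): string[] {
  const known = new Set<string>(available.map((name) => name.toLowerCase()));
  return required
    .filter((cmd) => !known.has(cmd.toLowerCase()))
    .map((cmd) => `${cmd} (${moduleFor(cmd)})`);
}

// Throws at startup with a readable message, instead of failing on the first agent call.
function assertServerSupports(required: readonly string[], available: readonly string[]): void {
  const missing = missingCommands(required, available);
  if (missing.length > 0) {
    throw new Error(`server is missing required commands: ${missing.join(", ")}`);
  }
}

// Hypothetical wiring with an ioredis-style client such as iovalkey:
//   const names = (await client.call("COMMAND", "LIST")) as string[];
//   assertServerSupports(["JSON.SET", "FT.CREATE", "FT.SEARCH"], names);
```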

The problem is that "Redis 8 with modules" is not a deployment target you can pick on the managed in-memory services of any of the three biggest clouds, whether you choose the Redis flavor or the Valkey one:

- ElastiCache for Redis tops out at Redis OSS 7.x. AWS did not adopt Redis 8, and it has never allowed custom modules. Picking "Redis" on AWS does not get you RedisJSON or RediSearch.

- ElastiCache for Valkey runs Valkey 7.2 and 8.x. It ships a native vector search that accepts FT.CREATE and FT.SEARCH, but it is a subset of RediSearch and still does not load the RedisJSON or RediSearch modules.

- MemoryDB for Redis supports Redis OSS 7.x. MemoryDB for Valkey supports Valkey 7.2 and 8.x. Neither loads modules.

- Memorystore for Valkey runs Valkey 7.2 and 8.1 with no Redis-proprietary modules. Memorystore for Redis is frozen at 7.2.

- Azure Cache for Redis Basic, Standard, and Premium tiers run Redis 7.x without modules. Only the Enterprise tiers offer RediSearch and RedisJSON, and they are a substantial price jump.

The common thread is that managed in-memory services do not let you install external modules. It is not a Valkey policy or an AWS policy. It is how all of them work. ElastiCache for Redis said so in 2023 and the answer has not changed. Memorystore for Redis says the same. Azure Cache Basic and Standard tiers say the same.

Picking "Redis" instead of "Valkey" does not fix the library. The Redis you get on managed services is not the Redis the library was written against. That Redis only exists in Docker, on machines you run yourself, or on Redis Cloud and Azure Cache Enterprise.

What "Redis 8" actually means

The version number hides two separate things. First, the fork split: Redis 8.0 is available under AGPLv3 as well as RSALv2 and SSPLv1, while Valkey 8.x stays BSD. Second, and more important for this post, the module bundle: Redis 8.0 folded eight previously separate modules into the core distribution, adding over 150 commands. Valkey 8.x did not. Neither did any major managed service.

A library that says "requires Redis 8" is really saying "requires the Redis Inc. distribution with its proprietary modules loaded." That is a much narrower statement than it looks. It is not satisfied by ElastiCache for Redis, ElastiCache for Valkey, MemoryDB in either flavor, Memorystore in either flavor, or Azure Cache for Redis Basic, Standard, or Premium. It is satisfied by a self-hosted Redis 8 container, by Redis Cloud, and by Azure Cache Enterprise.

The official langgraph-checkpoint-redis is the most-cited example because LangGraph is popular and the checkpointer is load-bearing, but the same pattern shows up in RedisVL, parts of LangChain's Redis integrations, and a growing number of agent memory libraries. They all assume a feature set that does not exist where most production workloads run.

The managed service reality

Here is what the big managed services actually support, regardless of which fork's name is on the box:

| Service | Engine version | Modules available | RedisJSON / RediSearch modules | Native JSON / vector |
|---|---|---|---|---|
| ElastiCache for Redis | Redis OSS 7.x | No | No | Native JSON, no vector search |
| ElastiCache for Valkey | Valkey 7.2 / 8.x | No | No | Native JSON, native vector search (subset) |
| MemoryDB for Redis | Redis OSS 7.x | No | No | Native JSON, no vector search |
| MemoryDB for Valkey | Valkey 7.2 / 8.x | No | No | Native JSON, native vector search (subset) |
| Memorystore for Valkey | Valkey 7.2 / 8.1 | No | No | Native vector search |
| Azure Cache for Redis (Basic/Std/Premium) | Redis 7.x | No | No | No |
| Azure Cache for Redis Enterprise | Redis 7.x | Yes | Yes | Yes |
| Redis Cloud (paid tiers) | Redis 8 | Yes | Yes | Yes |

For anyone planning an AI workload, the important point is that choosing ElastiCache for Redis does not change the picture. It is at Redis 7, it has no modules, and it will not grow them. ElastiCache for Valkey adds a native vector search that covers some of what FT.CREATE does, but it is not the RediSearch module and it does not implement every field type a library might try to create. That is exactly why n8n's Redis Vector Store node, for example, fails on ElastiCache for Valkey when it tries to create a TEXT field the native engine does not support.
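To catch that kind of failure before it reaches the server, setup code can validate an index schema against what the target engine implements. This is a sketch under a stated assumption: it treats TAG, NUMERIC, and VECTOR as the only field types the native engine accepts, which you should confirm against your provider's documentation. The FT.CREATE arguments follow RediSearch syntax for an HNSW vector index over hashes, and the function names are hypothetical.

```typescript
type FieldType = "TEXT" | "TAG" | "NUMERIC" | "VECTOR";

interface FieldSpec {
  name: string;
  type: FieldType;
}

// Assumption: the native engine implements only these field types (no TEXT).
const NATIVE_FIELD_TYPES: ReadonlySet<FieldType> = new Set<FieldType>(["TAG", "NUMERIC", "VECTOR"]);

// Lists the fields whose type the target engine would reject.
function unsupportedFields(
  fields: readonly FieldSpec[],
  supported: ReadonlySet<FieldType> = NATIVE_FIELD_TYPES,
): string[] {
  return fields.filter((f) => !supported.has(f.type)).map((f) => `${f.name} ${f.type}`);
}

// Builds FT.CREATE arguments for a hash-backed HNSW index, refusing schemas
// the native engine would reject instead of letting the server fail at runtime.
function ftCreateArgs(index: string, prefix: string, fields: readonly FieldSpec[], dim: number): string[] {
  const bad = unsupportedFields(fields);
  if (bad.length > 0) {
    throw new Error(`field types not supported by native search: ${bad.join(", ")}`);
  }
  const schema: string[] = [];
  for (const f of fields) {
    if (f.type === "VECTOR") {
      // "6" counts the attribute tokens that follow: TYPE, DIM, DISTANCE_METRIC and their values.
      schema.push(f.name, "VECTOR", "HNSW", "6", "TYPE", "FLOAT32", "DIM", String(dim), "DISTANCE_METRIC", "COSINE");
    } else {
      schema.push(f.name, f.type);
    }
  }
  return ["FT.CREATE", index, "ON", "HASH", "PREFIX", "1", prefix, "SCHEMA", ...schema];
}
```

With an ioredis-style client such as iovalkey, the array could be sent as client.call(args[0], ...args.slice(1)). Rejecting the schema locally turns a deploy-day surprise into a unit-test failure.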

The assumption in the library was never neutral. It quietly picks a vendor for you, and the vendor it picks is not AWS.

The options, none of them good

Once you discover the mismatch, there are three ways out.

Run Redis Stack yourself. Spin up an EC2 instance or a Kubernetes pod with redis-stack-server. Now you own the uptime, the backup strategy, the failover, the patching, the memory tuning, and the network isolation. You have traded a managed service for a bespoke one to satisfy a single library's module requirement.

Switch your managed service. Move to Redis Cloud or Azure Cache Enterprise. You pay the premium, you accept the vendor lock-in, and you hope the pricing model does not change again in a year. Your infrastructure decision is now driven by a transitive dependency of your agent framework. Notably, picking "ElastiCache for Redis" instead of "ElastiCache for Valkey" does not help you here. You still get no modules.

Drop the library. Write your own checkpointer against SET and GET. This works, and for small deployments it is genuinely the right call, but you have now taken on maintenance of an integration the framework was supposed to provide.

Each of these has a real cost. The cheapest one, by a lot, is to pick a library that does not force the choice on you.

The module-free path

This is why we built @betterdb/agent-cache with no module requirements at all. The LangGraph checkpointer stores each checkpoint as a plain JSON string under a SET key, with a pointer key for latest. Listing checkpoints uses SCAN instead of an indexed FT.SEARCH query. Session state stores each field under its own key, so every field carries its own TTL. Tool results and LLM responses use the same primitives. Every operation runs on vanilla Valkey 7+ and Redis 6.2+, and therefore on every managed in-memory service anyone is likely to use.
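The storage layout described above can be sketched with nothing but SET and GET. This is not the @betterdb/agent-cache source: the key names, the Checkpoint shape, and the in-memory MemoryKv stand-in are assumptions made so the example runs without a server. Against real Valkey, kv.set(key, value, ttl) corresponds to SET key value EX ttl, and a real client returns promises rather than plain values.

```typescript
// Minimal synchronous key-value surface for the sketch; a real Valkey client is async.
interface Kv {
  set(key: string, value: string, ttlSeconds?: number): void;
  get(key: string): string | null;
}

// In-memory stand-in so the sketch runs without a server. The clock is injectable for TTL tests.
class MemoryKv implements Kv {
  private readonly data = new Map<string, { value: string; expiresAt: number }>();
  constructor(private readonly now: () => number = Date.now) {}

  set(key: string, value: string, ttlSeconds?: number): void {
    const expiresAt = ttlSeconds === undefined ? Infinity : this.now() + ttlSeconds * 1000;
    this.data.set(key, { value, expiresAt });
  }

  get(key: string): string | null {
    const entry = this.data.get(key);
    if (entry === undefined) return null;
    if (this.now() >= entry.expiresAt) {
      this.data.delete(key); // expire lazily on access
      return null;
    }
    return entry.value;
  }
}

interface Checkpoint {
  threadId: string;
  id: string;
  state: unknown;
}

// Hypothetical key scheme: one key per checkpoint, a separate "latest" pointer,
// and one key per session field.
const checkpointKey = (threadId: string, id: string) => `ckpt:${threadId}:${id}`;
const latestKey = (threadId: string) => `ckpt-latest:${threadId}`;
const sessionKey = (sessionId: string, field: string) => `session:${sessionId}:${field}`;

function putCheckpoint(kv: Kv, cp: Checkpoint): void {
  // Write the checkpoint before moving the pointer to it.
  kv.set(checkpointKey(cp.threadId, cp.id), JSON.stringify(cp));
  kv.set(latestKey(cp.threadId), cp.id);
}

function getLatestCheckpoint(kv: Kv, threadId: string): Checkpoint | null {
  const id = kv.get(latestKey(threadId));
  if (id === null) return null;
  const raw = kv.get(checkpointKey(threadId, id));
  return raw === null ? null : (JSON.parse(raw) as Checkpoint);
}

// Session state: one key per field, so each field expires on its own schedule.
function setSessionField(kv: Kv, sessionId: string, field: string, value: unknown, ttlSeconds: number): void {
  kv.set(sessionKey(sessionId, field), JSON.stringify(value), ttlSeconds);
}

function getSessionField(kv: Kv, sessionId: string, field: string): unknown {
  const raw = kv.get(sessionKey(sessionId, field));
  return raw === null ? null : JSON.parse(raw);
}
```

Because the pointer key is written after the checkpoint it names, a reader following the pointer does not land on a key that has not been written yet.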

The tradeoff is real and worth naming: listing filtered checkpoints is O(n) in the number of checkpoints per thread instead of O(log n) with a RediSearch index. For a thread with hundreds or low thousands of checkpoints, the difference is not measurable. For a thread with millions, use langgraph-checkpoint-redis with Redis Stack or Redis Cloud. That is the right tool for that scale, and we are explicit about where the line falls.
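The listing side can be sketched the same way. SCAN walks the keyspace in cursor-sized batches and applies the MATCH pattern to each batch, so a listing costs one round trip per batch and grows linearly with the keys it passes over, which is the O(n) tradeoff named above. The key prefix, the ScanFn type, and the fakeScan stand-in are assumptions for illustration. With an ioredis-style client, the scan step would be the async client.scan(cursor, "MATCH", pattern, "COUNT", count).

```typescript
// One SCAN round trip: SCAN cursor MATCH pattern COUNT count -> [nextCursor, keys].
type ScanFn = (cursor: string, match: string, count: number) => [string, string[]];

// Lists checkpoint ids for a thread, newest first.
// Assumes keys are named ckpt:{threadId}:{checkpointId}, nothing else shares that prefix,
// and thread ids contain no glob metacharacters (*, ?, [ or \).
function listCheckpointIds(scan: ScanFn, threadId: string, limit = Infinity, count = 100): string[] {
  const prefix = `ckpt:${threadId}:`;
  const ids = new Set<string>(); // SCAN may return a key more than once, so dedupe
  let cursor = "0";
  do {
    const [next, keys] = scan(cursor, `${prefix}*`, count);
    for (const key of keys) ids.add(key.slice(prefix.length));
    cursor = next;
  } while (cursor !== "0");
  // Hypothetical: checkpoint ids sort chronologically, so newest first is a reverse sort.
  return Array.from(ids).sort().reverse().slice(0, limit);
}

// In-memory stand-in for SCAN over a fixed keyspace; MATCH supports only "*" here.
function fakeScan(keyspace: readonly string[]): ScanFn {
  const escapeRe = (s: string) => s.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
  return (cursor, match, count) => {
    const start = Number(cursor);
    const end = start + count;
    const re = new RegExp(`^${match.split("*").map(escapeRe).join(".*")}$`);
    // As with the real command, filtering happens per batch, so a batch can come back empty.
    const keys = keyspace.slice(start, end).filter((k) => re.test(k));
    return [end >= keyspace.length ? "0" : String(end), keys];
  };
}
```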

Everything else about the adapter is drop-in. You get BetterDBSaver for LangGraph, a BaseCache implementation for LangChain, and a middleware for Vercel AI SDK. Same connection, same Valkey, no second database to stand up, no module licensing to audit.

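```typescript
import { StateGraph, START, END } from '@langchain/langgraph';
import { AgentCache } from '@betterdb/agent-cache';
import { BetterDBSaver } from '@betterdb/agent-cache/langgraph';
import Valkey from 'iovalkey';

// One Valkey connection; the host comes from the environment.
const cache = new AgentCache({
  client: new Valkey({
    host: process.env.VALKEY_HOST,
    port: 6379,
  }),
});

// Checkpoints are stored under plain keys; no modules required.
const checkpointer = new BetterDBSaver({ cache });

// `schema` and `agentNode` are your own state definition and node function.
// LangGraph needs an entry edge from START before the graph will compile.
const graph = new StateGraph({ channels: schema })
  .addNode('agent', agentNode)
  .addEdge(START, 'agent')
  .addEdge('agent', END)
  .compile({ checkpointer });
```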

That runs on your laptop. It runs on ElastiCache. It runs on Memorystore. It runs on MemoryDB. It runs on Redis Cloud. The deploy-day surprise is gone.

The short version

"Redis 8 with modules" is a deployment target that does not exist on ElastiCache, MemoryDB, Memorystore, or Azure Cache Basic/Standard/Premium, whether you pick the Redis or the Valkey flavor. Libraries that assume it silently pick a vendor for you, and you find out at deploy time. The fix is to use primitives every Valkey and Redis supports, and accept the narrow tradeoff of SCAN-based listing over indexed queries. For nearly every agent workload, that tradeoff is invisible.

@betterdb/agent-cache is MIT-licensed, covers LangChain, LangGraph, and Vercel AI SDK, and ships with OpenTelemetry spans and Prometheus metrics on every operation. It is on npm, the source is on GitHub, and a Python port is next.

If you have already hit this wall, I would like to hear about it. If you have not, consider this a preview.