Valkey's Moment: Why AI Inference Needs What Postgres Can't Deliver

Why the database that was 'just a cache' might be the most important piece of your AI infrastructure

Let's face it

Postgres is having the best decade any database has ever had. And it deserves it.

pgvector turned it into a vector database. Extensions keep expanding what it can do. The ecosystem is thriving. Every week there's a new blog post about how Postgres is the only database you'll ever need. And honestly? For a lot of workloads, they're right.

But here's the thing that's been bugging me: somewhere in all the Postgres hype, people started assuming that what works for storage and retrieval also works for inference-time operations. That because Postgres can store your embeddings and search your vectors, it can also serve as the backbone of your AI inference pipeline.

It can't. And the reason is architectural - not something you can fix with an extension.

The uncomfortable truth about latency

Let me be direct: when we talk about AI inference at scale, we're not talking about "query your vector store and return the top 10 results." We're talking about the hot path - the operations that happen on every single inference call, thousands of times per second, where every millisecond compounds.
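To put rough numbers on "every millisecond compounds", here is a back-of-the-envelope sketch. The latencies, the number of lookups per call, and the call rate are all illustrative assumptions, not measurements of any real system.

```python
# Illustrative arithmetic only: every figure below is an assumption.

def hot_path_overhead_ms(lookups_per_call: int, latency_ms: float) -> float:
    """Store latency added to one inference call by sequential lookups."""
    return lookups_per_call * latency_ms


def store_wait_per_second_ms(calls_per_second: int, lookups_per_call: int,
                             latency_ms: float) -> float:
    """Total time all calls in one second spend waiting on the store."""
    return calls_per_second * hot_path_overhead_ms(lookups_per_call, latency_ms)


if __name__ == "__main__":
    scenarios = [("in-memory store (assumed 0.2 ms)", 0.2),
                 ("disk-backed store (assumed 5 ms)", 5.0)]
    for label, latency_ms in scenarios:
        per_call = hot_path_overhead_ms(lookups_per_call=4, latency_ms=latency_ms)
        per_second = store_wait_per_second_ms(2000, 4, latency_ms)
        print(f"{label}: {per_call:.1f} ms per call, "
              f"{per_second / 1000:.1f} s of cumulative waiting per second")
```

The point is not the specific figures but the multiplication: per-lookup latency is paid on every lookup of every call.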

There are three places where in-memory data stores like Valkey have become critical infrastructure for AI:

Semantic caching - storing LLM responses keyed by the embedding of the prompt, so that semantically similar requests don't trigger redundant calls to expensive foundation models. Every cache hit saves a full model invocation on a GPU. At scale, that can translate to millions of dollars annually.

KV cache management - the key-value cache that transformer models build up during inference is large, and it needs to be stored, shared, and retrieved across distributed inference engines. Valkey is already integrated as a storage backend in LMCache, a widely adopted open-source KV caching layer for vLLM and SGLang.

Session and context state - multi-turn conversations, agentic workflows, tool call results, retrieval context - all of it needs to be stored and accessed at inference speed.

In every single one of these cases, the requirement isn't "return results within 100ms." It's "return results in microseconds, consistently, under load, without tail latency surprises."

And that's where the architectural divide becomes a canyon.
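To make the first of those three use cases concrete, here is a minimal sketch of a semantic cache lookup: compare the embedding of an incoming prompt against stored ones and reuse the stored response when they are close enough. The `SemanticCache` class, the 0.9 threshold, and the toy three-dimensional vectors are all hypothetical. A plain Python list stands in for the store, and a real deployment would replace the linear scan with a vector index.

```python
import math
from typing import List, Optional, Tuple


def cosine_similarity(a: List[float], b: List[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """In-process stand-in for an in-memory store (hypothetical class)."""

    def __init__(self, threshold: float = 0.9) -> None:
        self.threshold = threshold  # assumed cutoff, tune per workload
        self._entries: List[Tuple[List[float], str]] = []

    def put(self, embedding: List[float], response: str) -> None:
        self._entries.append((list(embedding), response))

    def get(self, embedding: List[float]) -> Optional[str]:
        """Return the response of the most similar entry, if similar enough."""
        best_score, best_response = 0.0, None
        for stored, response in self._entries:
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


if __name__ == "__main__":
    cache = SemanticCache()
    cache.put([1.0, 0.0, 0.0], "cached answer")
    print(cache.get([0.98, 0.05, 0.0]))  # near-duplicate prompt: cache hit
    print(cache.get([0.0, 1.0, 0.0]))    # unrelated prompt: cache miss
```

On a hit, the model call is skipped entirely, which is where the savings come from.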

The Postgres problem (that isn't Postgres's fault)

Let me be clear - I'm not bashing Postgres. I've used it extensively (BetterDB Cloud runs on RDS Postgres for our tenant data). Postgres is exceptional at what it's designed to do.

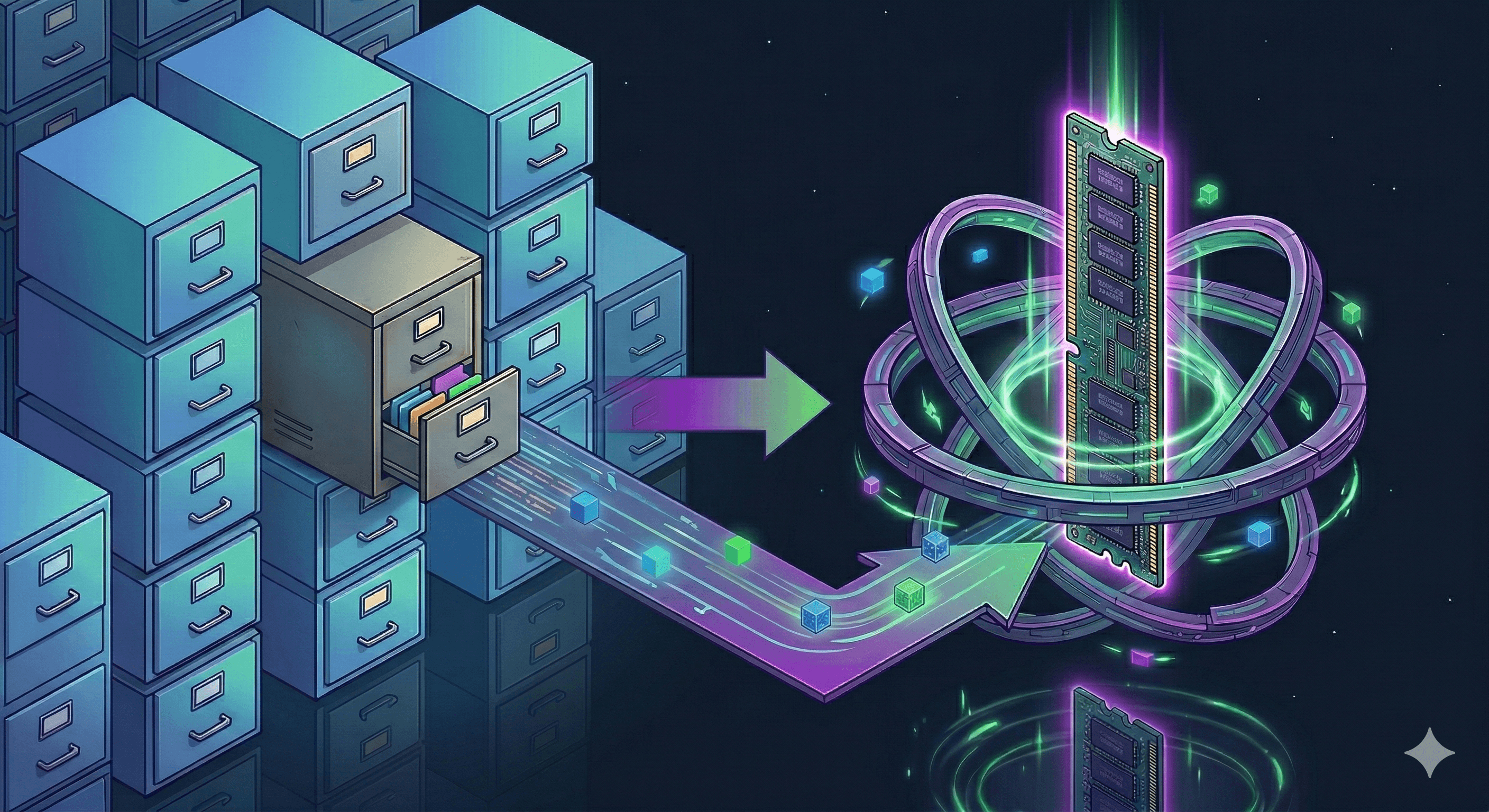

But here's what it's designed to do: store data on disk and use memory as a cache for that disk-based data.

pgvector has made incredible progress. Version 0.8.0 brought up to 9x faster query processing for certain patterns. HNSW indexes deliver solid approximate nearest neighbor search. For RAG pipelines where sub-100ms latency is acceptable and your dataset is under 10 million vectors, pgvector is a perfectly reasonable choice.

But "perfectly reasonable" is not what AI inference at scale needs. AI inference at scale needs:

Sub-millisecond latency, consistently. Valkey operates entirely in memory. There is no disk to page from, no buffer pool to miss, no I/O wait hiding in your P99. When Madelyn Olson (who leads Valkey development at AWS) describes why Valkey matters for AI, she makes it simple: by keeping the entire vector dataset in RAM, Valkey avoids the disk-lookup latency inherent in disk-based solutions like pgvector.

Predictable tail latency under load. This is where Postgres really struggles for inference workloads. Some published pgvector benchmarks report P95 latencies that spike dramatically under concurrent load - we're talking seconds, not milliseconds. When your HNSW index no longer fits in memory (shared_buffers plus the OS page cache) and lookups start hitting disk, latency doesn't degrade gracefully - it falls off a cliff. That's fine for a batch analytics query. It's catastrophic for real-time inference.

Simple, blazing-fast reads and writes. AI inference operations are predominantly key-value patterns: GET the cached embedding, SET the new KV cache block, check if this prefix exists. These are exactly the operations that the Redis/Valkey lineage has been architecturally optimized for over 15 years of development. Postgres can do key-value operations, sure - the same way a Swiss Army knife can open a bottle of wine. You can, but there's a better tool.
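The key-value pattern in that last point (GET, SET, "does this prefix exist?") can be sketched in a few lines. This is an illustration under assumptions: `InMemoryStore` is a dict-backed stand-in whose methods mirror the GET/SET/EXISTS commands, and the chunked prefix-hash key scheme is a hypothetical one for this example, not the scheme any particular KV caching layer actually uses.

```python
import hashlib
from typing import Dict, List, Optional


class InMemoryStore:
    """Dict-backed stand-in mirroring GET / SET / EXISTS (hypothetical)."""

    def __init__(self) -> None:
        self._data: Dict[str, bytes] = {}

    def set(self, key: str, value: bytes) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[bytes]:
        return self._data.get(key)

    def exists(self, key: str) -> bool:
        return key in self._data


def prefix_keys(token_ids: List[int], chunk_size: int) -> List[str]:
    """One key per full chunk; each key hashes the whole prefix up to it."""
    keys = []
    hasher = hashlib.sha256()
    full_length = len(token_ids) - len(token_ids) % chunk_size
    for start in range(0, full_length, chunk_size):
        chunk = token_ids[start:start + chunk_size]
        hasher.update(",".join(map(str, chunk)).encode() + b";")
        keys.append("kv:" + hasher.copy().hexdigest())
    return keys


def cached_prefix_chunks(store: InMemoryStore, token_ids: List[int],
                         chunk_size: int) -> int:
    """Count how many leading chunks of this prompt are already stored."""
    count = 0
    for key in prefix_keys(token_ids, chunk_size):
        if not store.exists(key):
            break
        count += 1
    return count
```

Because each key covers the entire prefix, two prompts share cached blocks only as far as their tokens actually match, and every check is a single key lookup with no query planning involved.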

Why AI inference is Valkey's reinvigoration moment

Here's the provocative part.

For the last couple of years, the narrative around in-memory data stores has been defensive. Postgres keeps gaining ground. The general vibe was "do we even need a separate cache layer?"

AI inference flips that script entirely.

The explosion of LLM workloads has created a class of problems where microsecond-level latency isn't a nice-to-have - it's the entire point. Consider what's happening in the vLLM ecosystem alone: KV cache has become so critical that there's now an entire open-source project (LMCache) dedicated to efficiently managing it across GPU, CPU, and external storage. And guess which external storage backends are supported? Valkey, Redis, and... not Postgres.

That's not an oversight. It's an architectural recognition.

The adoption pattern tells the story. When Kove ran benchmarks for AI inference workloads, they chose Redis and Valkey - because that's what the inference engines are actually using. AWS MemoryDB, built for durable in-memory workloads, launched as a Redis-compatible service and now offers Valkey as an engine. When vLLM needs to offload KV cache beyond GPU memory, the hierarchy goes GPU → CPU → Valkey. Not GPU → CPU → Postgres.
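The GPU → CPU → Valkey hierarchy is easier to picture as code. The sketch below is a toy model, not how vLLM or LMCache implement offloading: `LRUTier` and `TieredKVCache` are hypothetical classes, each tier is an in-process ordered dict, and the capacities are arbitrary. It shows the two behaviours that matter: evictions cascade down the tiers, and a hit in a slower tier promotes the block back to the fastest one.

```python
from collections import OrderedDict
from typing import List, Optional, Tuple


class LRUTier:
    """A fixed-capacity tier with least-recently-used eviction."""

    def __init__(self, name: str, capacity: int) -> None:
        self.name = name
        self.capacity = capacity
        self._items: "OrderedDict[str, bytes]" = OrderedDict()

    def get(self, key: str) -> Optional[bytes]:
        if key not in self._items:
            return None
        self._items.move_to_end(key)  # mark as recently used
        return self._items[key]

    def put(self, key: str, value: bytes) -> Optional[Tuple[str, bytes]]:
        """Insert a block; return the evicted (key, value), if any."""
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            return self._items.popitem(last=False)
        return None


class TieredKVCache:
    """Fastest tier first, e.g. GPU, then CPU, then a remote store."""

    def __init__(self, tiers: List[LRUTier]) -> None:
        self.tiers = tiers

    def put(self, key: str, value: bytes) -> None:
        item: Optional[Tuple[str, bytes]] = (key, value)
        for tier in self.tiers:
            item = tier.put(*item)  # eviction from one tier feeds the next
            if item is None:
                break
        # Anything evicted from the last tier is simply dropped.

    def get(self, key: str) -> Optional[Tuple[bytes, str]]:
        for index, tier in enumerate(self.tiers):
            value = tier.get(key)
            if value is not None:
                if index > 0:
                    self.put(key, value)  # promote to the fastest tier
                return value, tier.name
        return None
```

In this model the last tier is the only one that sits across a network hop, which is why its latency dominates whenever the faster tiers miss.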

Valkey and Redis have always been faster than Postgres for key-value operations. That was never in dispute. What's changed is that an entire category of workloads has emerged where that speed difference isn't a 2x improvement on your API response time - it's the difference between your inference pipeline working and not working.

But wait, doesn't Postgres have vectors now?

Yes. And Valkey has vectors too (Valkey Search supports vector similarity search as of 8.1). The difference isn't capability - it's architecture.

Think of it this way: Postgres added vector search because it makes sense to query embeddings alongside your relational data. You want to find similar products? Sure, join your vector search with your inventory table. That's a great use case for pgvector.

But inference-time caching doesn't need joins. It doesn't need ACID transactions. It doesn't need relational integrity. What it needs is: put this blob in memory, get it back as fast as physically possible, and do it a hundred thousand times per second without breaking a sweat.

Valkey doesn't have to work around a disk-first architecture to do this. It doesn't have to hope the buffer pool is warm. It doesn't have to manage WAL overhead on writes. It just... does the thing. In memory. At wire speed.
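Here is why "hoping the buffer pool is warm" matters more than averages suggest. The sketch builds a synthetic latency sample where a small share of requests miss memory and go to disk, then compares the mean with the percentiles. The 0.2 ms hit latency, 10 ms miss latency, and 2% miss rate are assumptions chosen for illustration, not benchmark results.

```python
import math
from typing import List


def nearest_rank_percentile(samples: List[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% at or below."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def synthetic_latencies(total: int, miss_every: int,
                        hit_ms: float, miss_ms: float) -> List[float]:
    """Every `miss_every`-th request is a miss that pays the disk latency."""
    return [miss_ms if i % miss_every == 0 else hit_ms for i in range(total)]


if __name__ == "__main__":
    # 1000 requests, 1 in 50 (2%) misses memory: all figures are assumed.
    samples = synthetic_latencies(total=1000, miss_every=50,
                                  hit_ms=0.2, miss_ms=10.0)
    mean = sum(samples) / len(samples)
    print(f"mean: {mean:.3f} ms")
    for pct in (50, 95, 99):
        print(f"P{pct}: {nearest_rank_percentile(samples, pct)} ms")
```

With these assumed inputs the mean stays under half a millisecond while the P99 is the full miss latency. A mostly-warm cache looks healthy on a dashboard of averages and still hurts every hundredth request.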

The persistent storage plot twist

One of the arguments against in-memory stores has always been: "but what about durability?" Fair point. If your data disappears on restart, that's a problem.

Except Valkey (and the cloud services built on it) has largely solved this. AWS MemoryDB gives you in-memory read performance with full durability: reads are served from memory, while writes pay a few milliseconds to be committed to a Multi-AZ transaction log. Valkey itself supports AOF persistence and RDB snapshots. And both Valkey and Redis are developing persistent storage layers that intelligently auto-balance data between memory and persistent storage based on access patterns.

So the "it's just a cache, it'll lose your data" argument doesn't hold anymore. You can have in-memory speed AND persistence. The question becomes: why would you add disk-level latency to your inference pipeline when you don't have to?

What this means practically

If you're building AI applications today, the architecture that's emerging looks like this:

Postgres for your relational data, user accounts, metadata, application state - everything it's always been great at. Use pgvector for your batch vector search, RAG retrieval where sub-100ms is fine, and anywhere you benefit from joining vectors with relational data.

Valkey for everything on the inference hot path - semantic caching, KV cache storage, session state, rate limiting, real-time feature stores, and anywhere microsecond latency matters. This is where Valkey isn't replacing Postgres. It's doing something Postgres architecturally cannot.

They're complementary. Not competing. But every architecture that treats the caching layer as optional is making a bet that latency doesn't matter. AI inference is the workload where that bet loses.
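The complementary split can be sketched as a cache-aside read: check the in-memory layer first, fall back to the system of record on a miss, then populate the cache with a TTL. Everything named here is hypothetical. `TTLCache` is an in-process stand-in for a Valkey client's get and set-with-expiry, and `load_from_database` stands in for whatever Postgres query your application runs.

```python
import time
from typing import Callable, Dict, Optional, Tuple


class TTLCache:
    """In-process stand-in for get / set-with-expiry on an in-memory store."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._data: Dict[str, Tuple[str, float]] = {}

    def set(self, key: str, value: str, ttl_seconds: float) -> None:
        self._data[key] = (value, self._clock() + ttl_seconds)

    def get(self, key: str) -> Optional[str]:
        entry = self._data.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._data[key]  # lazily expire stale entries
            return None
        return value


def read_through(cache: TTLCache, key: str,
                 load_from_database: Callable[[str], str],
                 ttl_seconds: float = 60.0) -> str:
    """Serve from the cache when possible; otherwise load and populate."""
    cached = cache.get(key)
    if cached is not None:
        return cached
    value = load_from_database(key)  # slow path: the system of record
    cache.set(key, value, ttl_seconds)
    return value
```

The database stays the source of truth. The cache only decides how often the hot path has to wait for it.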

The bottom line

Postgres is an incredible database that keeps getting better. Nothing I've written changes that.

But AI inference has created the first truly new, truly massive workload category in years where in-memory architecture isn't a nice optimization - it's a hard requirement. And that workload is growing fast.

For Valkey, this isn't about defending its relevance. It's about the world finally building the exact type of applications that an in-memory, microsecond-latency data store was designed for all along. The AI inference wave isn't a threat to Valkey - it's the reason Valkey exists.

Postgres gained ground because the world needed flexible, extensible data storage. Valkey's moment is happening because the world now needs something Postgres was never built to provide: memory-speed operations on the hottest path in modern computing.

And honestly? It's about time.